2026 and the Resurrection of the Analog Age

Disclaimer: References to specific companies, platforms, products, or aesthetics in this article are included for observational and analytical purposes only. They are not intended as endorsements, recommendations, or criticisms. The examples are used solely to illustrate broader cultural, behavioral, and design signals discussed throughout the piece.

There is now a quiet discomfort that shows up when we open our phones without knowing why, when we scroll without remembering what we saw, when hours dissolve into feeds that promise connection but deliver very little of it.

For many people, this isn’t only about being “online too much.” It’s about feeling led by screens rather than choosing them. About stimulation without satisfaction. Presence without memory. A sense of emotional numbness that’s hard to name, but easy to recognise.

This feeling has been growing for years. Psychologists and public health bodies now openly describe behaviours like doomscrolling and media overload as patterns linked to stress, anxiety, and emotional fatigue (American Psychological Association).

Doomscrolling

Doomscrolling is not about seeking information. It’s about staying in motion when stopping feels uncomfortable. It is a behavioral pattern shaped less by choice and more by interface design.

And now, in 2026, something begins to shift.

We see people talking about bringing back CD players and cassettes, choosing physical media again, or intentionally setting days with no screens at all. When we look at this more carefully, it does not appear to be nostalgia in the way previous generations experienced it.

It is also not a desire to abandon technology entirely. What seems to be emerging instead is a more deliberate questioning of it. A pause. A re-evaluation. And that questioning is beginning to shape culture, design, art, and everyday behavior in ways that feel distinctly analog.

The age of Analog, version two, is emerging.

Let me show you the landscape.

When the digital world stopped feeling weightless

For a long time, the digital world felt abstract. The cloud lived somewhere far away. Data felt infinite. Everything online appeared immaterial.

But in recent years, that illusion has started to crack.

As generative AI systems scale, the physical reality behind them has become harder to ignore. Data centers, the infrastructure that powers cloud services and AI models, now consume enormous amounts of electricity. According to the International Energy Agency, electricity demand from data centers is expected to grow significantly toward 2026, driven largely by AI workloads and large-scale computing needs (IEA Electricity 2024). In the United States, the Department of Energy has reported that data centers already account for a growing share of national electricity consumption, with projections showing rapid increases as AI adoption accelerates (U.S. Department of Energy).

In some regions, this demand is no longer treated as a technical detail but as a matter of public infrastructure. Governments and utilities are openly debating how to expand power grids to support AI data centers and who should ultimately bear those costs, a discussion increasingly reflected in public reporting (Reuters).

Electricity is only part of the story.

Many data centers rely on water-based cooling systems to prevent overheating, often using potable water. Researchers from the University of California, Riverside estimate that training and operating large AI models can require substantial amounts of freshwater, bringing attention to a resource most people never associated with digital products before (“Making AI Less Thirsty,” Li et al.: https://arxiv.org/abs/2304.03271). Environmental and energy organizations have similarly warned that some facilities consume water at a scale comparable to small municipalities (Environmental and Energy Study Institute).

Suddenly, digital no longer feels weightless.

What once seemed virtual now has land, cables, cooling systems, power stations, and water pipes attached to it. The cloud begins to look less like an abstract idea and more like physical infrastructure. For many people, this realization quietly changes their relationship with technology. When every prompt, stream, or model carries a material cost, digital stops feeling invisible. It becomes something tangible, something shared, something that draws from collective resources. And when technology becomes infrastructure, people begin to question it differently. Not with fear. But with awareness.

When we no longer know what is real

At the same time that the digital world has become heavier, it has also become less certain.

Images can now be generated in seconds. Videos can be convincingly fabricated. Voices can be cloned with unsettling accuracy. What once functioned as proof no longer carries the same weight. This shift is no longer theoretical. In early 2026, tools like Grok, the AI chatbot integrated into X, were widely reported for generating manipulated images of real people without consent, triggering public backlash and regulatory scrutiny (The Verge; Reuters).

At the same time, AI-generated voice cloning has been used in impersonation attempts and harassment cases, pushing regulators to intervene. In the United States, the Federal Communications Commission has declared AI-generated voices in robocalls illegal, acknowledging how easily trust can be exploited when voice itself is no longer reliable (FCC).

For everyday users, these moments accumulate quietly. If a photo can be fabricated and a voice can be synthesized, what does “real” online even mean anymore?

Screens that once documented experience now come with an unspoken question mark. In response, many people begin to value what cannot be generated or edited. Being somewhere matters more. Seeing something with your own eyes regains meaning. Shared moments start to feel safer than shared content. This is not fear of technology. It is a recalibration of trust. And when trust shifts, behavior follows.

Data, consent, and the illusion of control

As uncertainty grows around what we see and hear online, another feeling deepens alongside it: the sense that participation in the digital world is no longer entirely voluntary. Most platforms insist that users are in control of their data. Privacy dashboards exist. Settings are available. Consent is technically possible. Yet in practice, control often feels performative.

Regulators have increasingly described certain digital product designs as deceptive, pointing to interfaces that nudge users toward sharing more data while making refusal confusing or time-consuming. In a detailed report, the U.S. Federal Trade Commission warned that so-called dark patterns are widely used to influence user behavior while preserving the appearance of choice (FTC).

This gap between what platforms promise and what users experience slowly erodes trust. Opting out requires effort. Understanding policies requires time. And even when users do adjust settings, there is rarely confidence that those choices meaningfully change what happens behind the scenes.

That tension became more visible as companies expanded the use of personal content to train generative AI systems. In 2024 and 2025, Meta announced plans to use public user data in parts of its AI training pipeline, offering opt-out mechanisms that drew criticism from privacy groups and regulators across Europe (Associated Press; Reuters). For many users, the issue was not only about AI. It was about consent itself. Participation felt assumed. Objection felt exceptional. At the same time, large-scale data breaches continued to expose personal information, reinforcing the sense that once data enters digital systems, it rarely stays contained (U.S. National Public Data breach overview).

None of this produces outrage on its own. Instead, it creates something quieter: resignation.

When people feel that control exists mostly in theory, engagement becomes transactional. Trust becomes conditional. And the desire to reduce exposure grows stronger than the desire to optimize participation. This is where many people begin to step back, not dramatically, but selectively. Not leaving the digital world entirely. Just trying to give it a little less of themselves.

Selective withdrawal and harm reduction

What’s emerging in response to all of this is not rebellion. It is reduction.

Most people are not logging off completely, throwing away their phones, or rejecting technology outright. Instead, they are quietly reshaping their relationship with it. This mindset resembles harm reduction more than resistance. We see it in small but meaningful shifts. Some people replace default tools with alternatives that collect less data. Others limit how many platforms they actively participate in. Many try to reduce algorithmic exposure rather than eliminate digital life entirely.

These behaviors are not driven by idealism. They are responses to accumulated signals.

Over the past few years, large-scale data leaks have made the consequences of digital participation harder to ignore. Investigations by 404 Media and Wired revealed that more than 12,000 mobile apps were collecting and leaking precise location data, often without users’ clear understanding or consent. Reports documented how this data was routinely sold, shared, or left unsecured, reinforcing the sense that once information enters digital systems, control quickly dissolves.

As awareness grows, withdrawal begins to look less like paranoia and more like pragmatism.

This shift has coincided with the growth of privacy-oriented products. The Brave browser, which positions itself around blocking trackers and reducing surveillance by default, surpassed 100 million monthly active users in 2025, suggesting that a significant number of people are actively choosing tools designed to extract less from them (The Verge).

Alongside this, practices such as “de-Googling” have gained visibility. For some users, this reflects an explicit values-based decision. When company policies, data practices, or ethical positions no longer align with personal beliefs, continued use begins to feel uncomfortable. Leaving becomes a way of withdrawing support, even if the process is gradual and imperfect.

In this context, selective withdrawal is not about achieving purity or control. It is about alignment.

Reducing dependence. Creating distance. Participating more intentionally in systems that increasingly feel difficult to trust.

Example: Analog Year Goals

Here is an example from Dr. Avriel Epps’s Instagram account. She is a computational social scientist and an assistant professor at the University of California, Riverside.

At the same time, privacy itself has become a structural force shaping digital ecosystems. Apple’s App Tracking Transparency feature significantly limited cross-app tracking, an impact documented by economists and regulators alike (Working paper on ATT impact). The fact that such measures have triggered legal challenges and antitrust scrutiny further reflects how contested data collection has become (Reuters).

What’s important here is not which tools people choose. It’s the intention behind the choice. People know these changes are partial. Using a different browser does not make someone invisible. Turning off a setting does not guarantee protection. But reduction feels possible. Instead of asking “how do I fully escape this system,” people ask “how do I participate less intensely.” That shift in mindset is subtle, but powerful. It reflects a growing desire for agency in environments that increasingly feel extractive. And once people begin reducing digital intensity, something else becomes noticeable. They start looking for experiences that feel fuller elsewhere.

Hardware as a signal, not a comeback

These shifts are not only visible in software and behavior. They also appear, more quietly, in hardware.

Over the past few years, there has been renewed interest in devices that intentionally limit functionality rather than expand it. Minimalist phones, distraction-reduced devices, and communication-first hardware have begun to reappear, often positioned not as replacements for smartphones, but as complements to them. The return of BlackBerry-style devices is a telling example. New Android-based phones featuring physical keyboards have entered the market, explicitly prioritizing messaging and email as core use cases (The Verge: https://www.theverge.com/tech/851298/clicks-communicator-phone-blackberry-keyboard).

The concept here: the phone is a “Communicator,” limited to your essential apps for when you want an escape from your main phone.

Image: Clicks

What matters here is not market share. These devices remain niche.

What matters is what they represent.

Physical experience as the new value proposition

As people begin to reduce their digital intensity, a parallel shift starts to appear in what they seek instead. Rather than spending more time online, many are looking for ways to use digital tools simply to arrive somewhere else.

We see this in how platforms that focus on events and experiences are being used. Event discovery tools increasingly position themselves as facilitators of attendance rather than destinations for endless browsing. Recent trend reporting shows growing demand for smaller, local, in-person gatherings that prioritize shared experience over scale or virality (Eventbrite Trends Report).

At the same time, there has been a visible return of community-led spaces that exist outside the logic of feeds and followers. Running clubs, book clubs, walking groups, sketch meetups, and informal creative circles have re-emerged as social anchors, offering connection without performance. Cultural and business reporting has described this revival of offline third spaces as a response to the fragmentation and isolation of digital life. What’s interesting is that many of these experiences are not framed as anti-technology. Digital tools are still used to organize, coordinate, and discover. But the value no longer lives in the platform itself. It lives in showing up.

This shift is also influencing how some digital products are designed. Increasing criticism of infinite scroll and engagement-driven interfaces has pushed designers and writers to question whether more time spent is always a success metric. As explored in design and technology commentary, there is growing interest in products that discourage endless consumption and instead support brief, intentional use.

Together, these signals point to a subtle redefinition of value. In a world where content is infinite, experience becomes scarce. And when something becomes scarce, it begins to matter again.

Signals in design, brands, and visual culture

As behaviors shift, design begins to change with them.

Not dramatically. Not all at once. But in small, telling decisions that signal different values beneath the surface.

Across digital products and brand experiences, we start to see a move away from visual perfection and hyper-optimization. Interfaces no longer aim only to be frictionless. Visual systems no longer prioritize endless polish. Instead, texture, authorship, and human presence begin to reappear in spaces that once felt purely functional.

A clear example can be seen in how some luxury and cultural brands approach digital design. In early 2026, Hermès launched an online experience built around hand-crafted illustration rather than AI-generated imagery or photorealistic assets. Design publications described the site as intentionally editorial and human, emphasizing craft, storytelling, and visual imperfection as a form of differentiation (Fast Company; Domus).

What makes this significant is not the aesthetic itself, but what it communicates.

In a moment when generative imagery is abundant, technically impressive, and instantly producible, choosing hand-drawn visuals becomes a statement of authorship. It signals that someone made this. That time was spent. That decisions were intentional. Similar thinking appears in brand identities that foreground imperfect photography, disposable-camera imagery, or hand-rendered elements, using memory and trace as emotional anchors rather than hiding irregularity (Creative Bloq).

Even the comeback of the Gen X Soft Club aesthetic is a telling signal. Some fashion and culture coverage describes Gen X Soft Club as a short-lived, late-90s to early-2000s pocket of style that is now being relabeled and circulated by Gen Z, often positioned as the quieter sibling of mainstream Y2K (Fashionista explainer). You can also see how it is translated for social platforms in real time. The TikTok account of The Digital Fairy, a UK-based creative agency, has shared breakdowns of the aesthetic. These breakdowns matter less as definitions than as signals of how the look is being revived, simplified, and spread.

Gen X Soft Club

“Gen X” refers to the generational target audience, and “Soft Club” refers to the urbane, gentrified image of club culture it portrays (intended to resonate with young adults in the 90s). The aesthetic is defined by cool, muted colors, especially blues, greens, and grays. These are often combined with references to technology or futurism, expressed through choices like typefaces and cityscapes, along with blurred and bleached image effects.

Alongside this, shifts are visible in web design language itself. Monochrome palettes are returning as a way to reduce visual noise. Morphing and fluid systems introduce movement that prioritizes expression over efficiency. Modularity has become more visible, revealing structure rather than hiding it, which may also be, in my opinion, a response to widespread design templates that increased productivity while reducing expression. What matters here is not whether these choices are better or worse design. They are signals.

The same tension becomes even more visible in art.

As generative tools grow more capable, images can now be produced instantly in almost any style. Technical mastery is no longer scarce. And yet, rather than replacing human expression, this abundance appears to have triggered an opposite response.

Across creative communities, there is renewed interest in work that carries visible traces of making. Hand-drawn illustration. Rough typography. Ceramics, printmaking, zines, collage, and physical installations. Art that shows process, hesitation, and time.

This shift is not a rejection of AI-generated art. Many artists continue to experiment with it, and institutions actively exhibit work created with generative systems. But alongside that experimentation, a parallel desire is emerging for work that cannot be instantly reproduced. Design and culture publications have noted a growing emphasis on craft, materiality, and physical making in exhibitions and creative events, framing it as a response to increasingly automated visual culture.

In these works, value does not come from polish. It comes from trace. From brushstrokes, pressure, aging materials, and evidence of time. Where AI creation collapses time into seconds, handmade work expands it. What people seem to be responding to is not whether something looks good, but whether it feels lived. This does not signal a return to tradition out of nostalgia. It reflects a recalibration of meaning. When creation becomes effortless, effort itself becomes expressive. In this context, imperfection is not a flaw. It is evidence. And this renewed attention to human trace mirrors what we see across technology, design, and behavior. A desire to reconnect with things that feel grounded, intentional, and embodied.

What this means for design research and practice

When we step back and look at these signals together, a broader pattern begins to emerge.

What we are seeing is not a rejection of technology, nor a simple return to older formats. It is a response to systems that have become increasingly abstract, automated, and difficult for individuals to reason about.

Environmental cost, synthetic media, data extraction, and design standardization are not isolated issues. They are interconnected outcomes of the same acceleration logic. From a systems thinking perspective, the Analog shift appears less as a trend and more as a form of user adaptation. As complexity increases and visibility decreases, people search for ways to restore legibility. They gravitate toward experiences that feel understandable, bounded, and grounded.

An emerging persona begins to take shape through this lens.

Predominantly observed in the Global North, this user is digitally fluent, not digitally excluded. They understand platforms, algorithms, and automation. Their response is not driven by fear or lack of access, but by saturation. They are not asking for less technology, but for technology that feels interpretable, accountable, and human-scaled. Importantly, this persona emerges within specific conditions: stable infrastructure, high digital penetration, and growing awareness of environmental and data-related externalities.

Whether similar sentiments exist in Arab contexts remains largely unknown.

While the region shares many of the same external pressures, including rising energy demand, water scarcity, and expanding digital infrastructure, there is limited research exploring how people here perceive these trade-offs. We do not yet have strong insight into whether digital fatigue, AI uncertainty, or selective withdrawal manifest in similar ways, or whether cultural, social, and economic differences produce entirely different responses. This gap represents a significant missed opportunity.

For research centers, organizations, and businesses operating in the region, the absence of localized insight means decisions are often made using global narratives that may not fully apply. Understanding how people experience trust, technology, presence, and extraction in local contexts is not only academically valuable, but strategically necessary.

For practitioners, these signals carry practical implications.

For design researchers, they suggest the importance of studying not only moments of engagement, but moments of resistance, avoidance, and reduction. What people stop doing, mute, or move away from can be as informative as what they adopt.

For UX designers and product teams, they challenge assumptions that more interaction always equals more value. Speed, automation, and frictionless flows may improve efficiency while quietly eroding meaning or trust.

For product owners, managers, and business leaders, these patterns invite a broader reframing of value. Growth metrics, engagement time, and automation efficiency capture only part of what people experience. Trust, interpretability, and emotional sustainability are harder to measure, but increasingly central to long-term relevance.

In the next piece, I will explore this landscape more concretely, focusing on how these signals can inform research approaches, strategic framing, and design decision-making. It will be a practical guide for design researchers, UX practitioners, product owners, managers, and founders, including practices and worksheets that teams can use to reflect, assess, and apply these ideas within their own organizations.

This article does not attempt to define a new era or prescribe a direction. It simply maps a landscape.

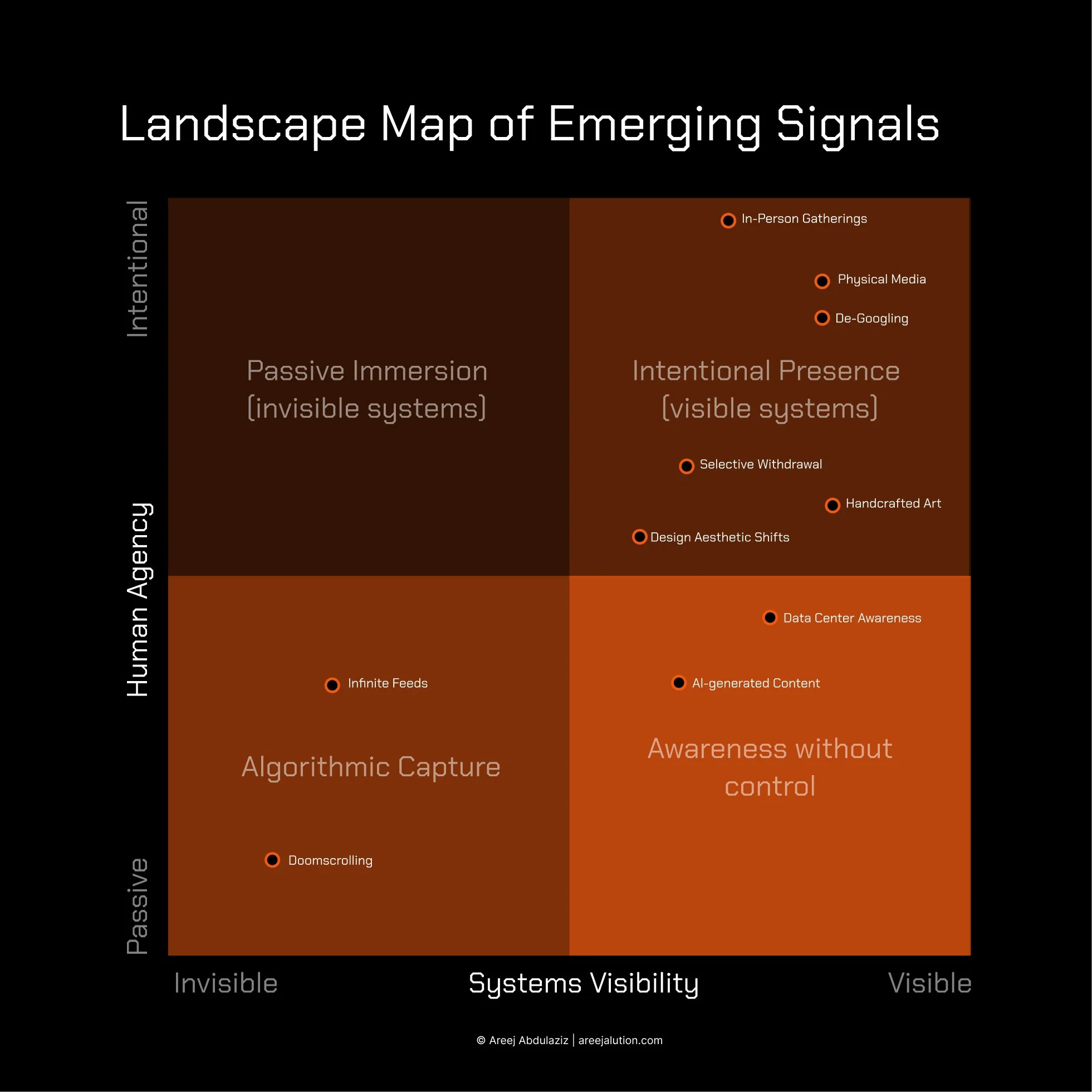

Landscape Map of Emerging Signals

This map visualizes emerging signals rather than dominant behaviors. Positions reflect relationships and tensions, not progress or value judgments.

If you are seeing similar signals in your work, your users, or your organisation, especially in Arab settings, I would love to hear how they are showing up for you. The conversation around this moment is still forming, and shared observations can help make it clearer.